The latest AI fraud story, in which Murphy Campbell’s voice is cloned and her recordings are falsely claimed, has triggered a familiar reflex: Why didn’t the artist register? Why wasn’t the system able to detect it? It’s the artist’s fault.

That intuition is backwards.

What we’re seeing is not an artist compliance failure. It’s the predictable result of a system built to ingest first, verify later, and distribute money in between. AI has merely exposed how fragile that model always was.

The current fallback, “artists should register with ACR systems,” doesn’t solve that problem. It reframes it. It shifts responsibility downstream, onto the very people who have the least control over what enters the system in the first place. Because when it comes to platforms, the safe harbor regime has taught them one lesson that they’ve learned very well: Any wrongdoing is always someone else’s fault.

On Kafka’s Web, if ACR is going to be treated as a safeguard to protect platforms from themselves, then the burden has to flip.

Spotify tells artists they have to use one of a specific group of distributors that play ball with Spotify. If a distributor wants to upload a recording that’s not already in an ACR database, the first question shouldn’t be whether the artist registered it. The question should be: Where did this track come from? Who created it? What’s the chain of title? What rights are being asserted? Because as Spotify tells us, these distributors are the adults in the room and clear everything, right? Wrong.

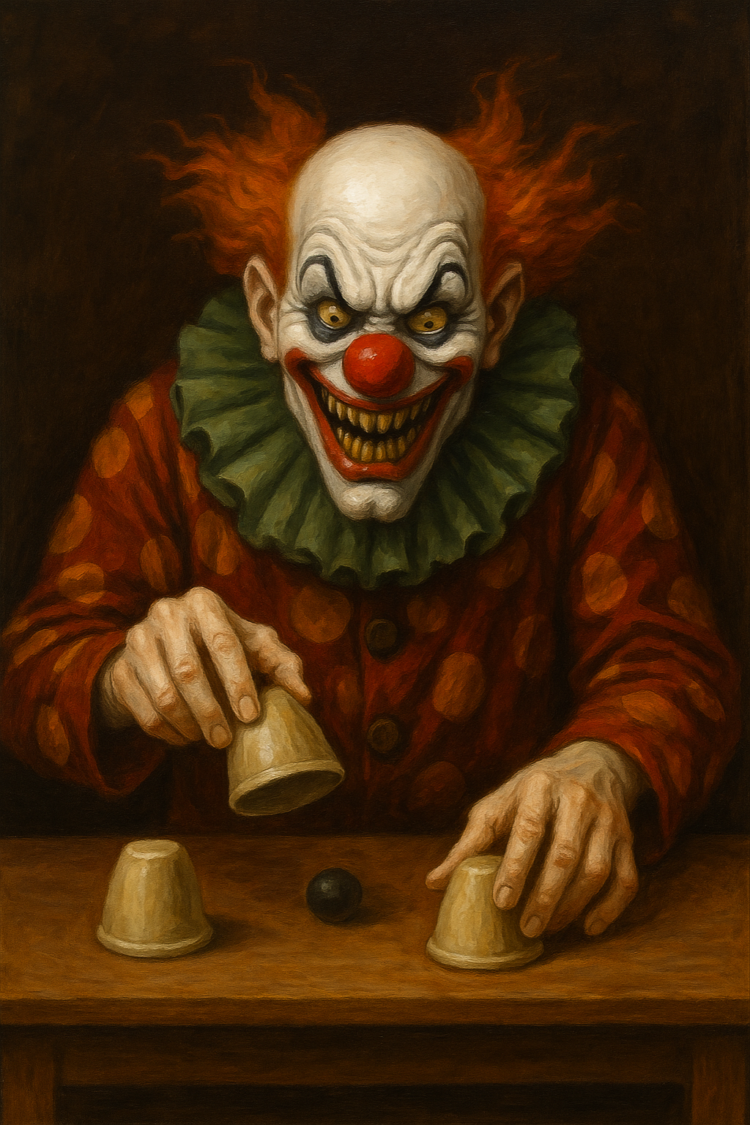

If a track is being entered into an ACR database for the first time, then whoever maintains that database must know their customer. Otherwise, what is being verified? An ACR system that accepts unidentified inputs is not protecting anything. It’s merely recording claims, including fraudulent ones, and giving them a flimsy veneer of legitimacy. At that point, “registration” becomes a race, and whoever uploads first can shape the data record.

That isn’t a safeguard. It’s a laundering mechanism. Once you see that clearly, responsibility becomes harder to dodge. It’s actually worse than a quitclaim: it’s a bet you wouldn’t take in Vegas.

If a distributor pushes a fraudulent or unauthorized track into the system, and the platform allows it to go live without meaningful verification, then at a minimum the distributor and the platform are jointly responsible for that track being there in the first place. The harm doesn’t begin when the artist complains. It begins at ingestion.
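To make the ingestion-side questions concrete, here is a minimal Python sketch of a gate that holds a first-time registration unless the submitter has been identified and the provenance questions are answered. Everything in it (the `Submission` fields, `known_fingerprints`, and `review_submission`) is hypothetical and invented for illustration; it does not describe how any real distributor, platform, or ACR vendor works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Submission:
    """Hypothetical record a distributor sends along with a new track."""
    fingerprint: str            # stand-in for an ACR audio fingerprint
    submitter_verified: bool    # has the database operator identified its customer?
    creator: str = ""           # who created the recording
    chain_of_title: str = ""    # how the rights reached the submitter
    rights_asserted: str = ""   # which rights the submitter claims


def review_submission(sub: Submission, known_fingerprints: set[str]) -> list[str]:
    """Return the reasons to hold a track; an empty list means it may proceed."""
    if sub.fingerprint in known_fingerprints:
        # Already registered: this sketch hands the match to a separate review.
        return ["fingerprint already registered; route to conflict review"]
    problems = []
    if not sub.submitter_verified:
        problems.append("submitter not identified")
    if not sub.creator:
        problems.append("creator not stated")
    if not sub.chain_of_title:
        problems.append("chain of title not stated")
    if not sub.rights_asserted:
        problems.append("rights asserted not stated")
    return problems
```

The point of the sketch is the ordering: the checks run before anything is recorded, so being first to upload confers no advantage on an unidentified submitter.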

But the problem doesn’t stop there.

When a platform takes that track, whether it’s a clone or simply misattributed, and places it on the artist’s page, that’s no longer passive ingestion. That’s an independent, intervening act. The platform is not just hosting content. It’s making an affirmative decision about identity, attribution, and association.

That decision carries its own responsibility. And it is also the easiest problem in the entire system to fix. Don’t allow any track to appear on an artist’s page without that artist’s consent. No consent, no placement. It’s a simple rule, and it would eliminate an enormous amount of downstream harm.

You’d think platforms would want that permission. After all, the artist page is not just a directory. It’s the core identity layer of the service, the place where reputation, discovery, and monetization converge. Treating it as an open surface that anyone can write to is not a technical limitation. It’s a design choice.
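As a rough illustration of how small the “no consent, no placement” rule is in practice, the Python sketch below models an artist page where anyone can propose a track but only the page’s own artist can place it. The `ArtistPage` class and its method names are hypothetical, made up for this example, and are not any platform’s actual API.

```python
from dataclasses import dataclass, field


@dataclass
class ArtistPage:
    """Hypothetical artist page that only the artist can approve additions to."""
    artist_id: str
    tracks: list[str] = field(default_factory=list)    # visible on the page
    pending: list[str] = field(default_factory=list)   # awaiting the artist's decision

    def propose(self, track_id: str) -> None:
        """Anyone may propose a track; proposing never publishes it."""
        if track_id not in self.tracks and track_id not in self.pending:
            self.pending.append(track_id)

    def decide(self, track_id: str, decided_by: str, approve: bool) -> bool:
        """Place the track only if the page's own artist approves it."""
        if decided_by != self.artist_id or track_id not in self.pending:
            return False  # not the artist, or nothing to decide: page is unchanged
        self.pending.remove(track_id)
        if approve:
            self.tracks.append(track_id)
        return approve
```

In this sketch the default is absence: a proposed track stays off the page until the artist says yes, and a decision from anyone else changes nothing.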

More broadly, all of these issues point back to the same underlying reality: platforms have optimized for speed over certainty. That isn’t an accident. It reflects a set of incentives that reward volume, growth, and frictionless ingestion while treating verification as a cost center. In a system like that, the fastest actor wins, whether the content is legitimate or not.

The reason that model persists is simple: platforms get away with it.

They operate within legal frameworks, whether under the DMCA, Section 230, or similar doctrines, that were built for a different internet. These regimes assumed passive intermediaries dealing with user-generated content at human scale. What we have instead are systems that ingest, classify, attribute, and monetize content at industrial scale, while still invoking the language of passivity when it comes to responsibility.

Even apart from formal safe harbors, there is a practical barrier: platforms are extraordinarily difficult to hold accountable without litigation. And litigation is expensive. The asymmetry is structural. The same systems that allow questionable content to be uploaded and monetized generate the revenue that funds the defense when those practices are challenged. By the time a dispute is resolved, if it ever is, the money has already moved.

That dynamic creates a feedback loop. Speed and scale generate more content. More content generates more revenue. That revenue reinforces the platform’s ability to resist scrutiny and absorb legal risk. Meanwhile, the costs (misattribution, fraud, identity misuse) are distributed across thousands of creators.

Or, as Stalin is supposed to have said, quantity has a quality all its own.

In this context, that “quality” is not innovation. It’s insulation. The sheer volume of content makes verification harder, enforcement slower, and accountability more diffuse. And because the system is designed to favor ingestion over certainty, these outcomes are not anomalies. They’re features.

It’s an unvirtuous circle.

And until platforms are required to internalize the cost of getting it wrong, until they slow down, verify what they’re doing, and take responsibility for what they publish and pay, the default will remain the same: Shoot, ready, aim. Publish first, ask questions later, and let someone else bear the consequences. Which is why “register with ACR” is not a solution any more than “register for AI opt-out” is.

It’s a smokescreen.